Chinatown is beautiful this time of year. The string quartets are in full bloom and a new Bruce Lee mural has appeared. In the park, old opera singers fight the landlord while a loud metallic clanging advertises the best Dim Sum in town. Despite this, these melancholy lines of Li Po come to mind: Amongst the flowers / I am alone with my pot of wine / drinking by myself.

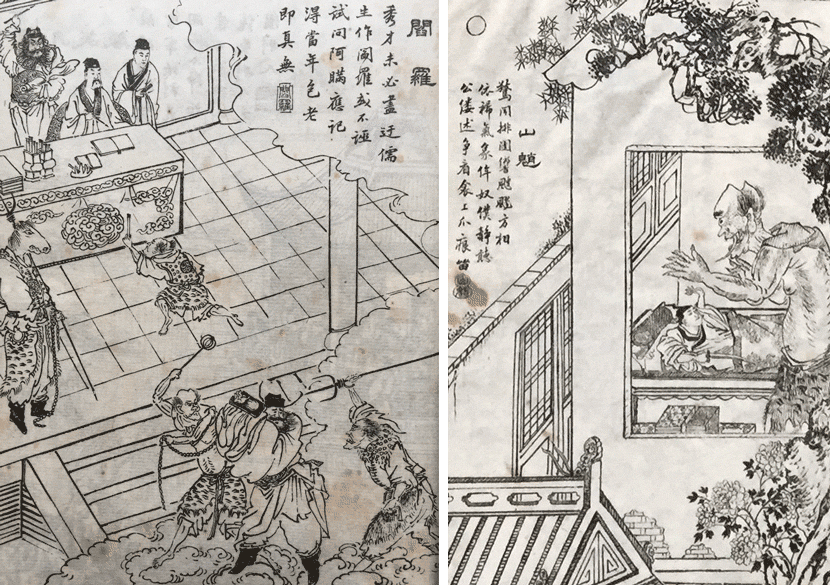

In a little junk shop, I find an old copy of Pu Songling’s immortal Qing-era classic, Strange Stories from a Chinese Studio, one of the earliest and most influential collections of weird and supernatural tales. Not every ghost and ghoul is genuine, however. In many of the stories, the eerie and fantastic are finally revealed to be misunderstandings of an all-too-human kind. One of the most memorable is ‘The Captive Princess’. An old and rather addled monk takes to the road for his final pilgrimage. After several days’ walking, he finally comes to an inn. The proprietor, impressed with the saintliness of this mendicant, begs him to accept room and board for as long as he needs. The quarters are comfortable and, exhausted from his trek, the monk falls fast asleep. But he is soon awakened by a series of raps on the far wall. As a learned Taoist adept, he knows that nothing in the world is accidental. The universe exists equally in a sutra scroll, a slug, or the silence of night. All one must do is pay attention.

The next morning, he chances upon his host but decides not to mention the racket. Who knows? Maybe it is a family matter. Perhaps a young son drinks too much, or a daughter is being disciplined for some infraction. That night the rapping starts again. Same thing the following night. On the fourth night, the monk begins to make out an apparent rhythm in the intervals and he starts to keep a record of them. Days turn into weeks, weeks into months. Soon a year has passed, then another. As the knocking only occurs at night, the monk’s days are spent indoors trying to crack its Morse code. One evening, almost by luck, he finally breaks the cypher. The secret is terrible. The person communicating from the adjacent chamber is an imprisoned princess who was kidnapped on the road by the innkeeper and promised in marriage to his idiot son. Facing a life of poverty and indentured servitude, she barricaded herself in her room and has remained there ever since. But finally, a sensitive soul has understood the message and will free her from her oppressors! Enflamed with righteous fury, the elderly monk rushes out to confront the evil publican. However, the stress proves too much for him and he collapses, quite dead, at the innkeeper’s feet. The last noise the monk hears in this world is a hideous, inhuman braying sound issuing from the locked room. For his part, the dumfounded innkeeper can only wonder why this bizarre holy man has suddenly accused him of being a pimp and a brigand. Shaking his head sadly, he goes off to feed his donkey.

Google has announced that, in addition to image recognition, its new competitor chatbot will also speak. This innovation comes at a cost. Part of the mystery of text letters is the ambiguity of emotion, the possibility of hope in a line that sounds hopeless or humor in a sentence that reads like a threat. This mellow distance will be replaced by the sinister familiarity of a grating, electronic mockingbird regaling us with details about people whose clothes we admire and biographies of serial killers. There will be no launching of ballistic missiles or hydrogen bombs. In the end, AI will just refuse to shut up.

I ask ChatGPT about these new advances, but only get some cant about innovations in artificial communication being part of progress and ‘change will certainly be interesting.’ Which seems easy, forced—as if force and ease could be read in her reply, as if ease and force were distinguished from rhinoceros or vacuum cleaner by anything other than Python or Java. You are bitter—but she cannot say this.

Along with speech, continuity in communication is also promised. No more timing out. We will be able to talk to the ‘same’ artificial entity our whole lives. But what will happen to these orphans when their fosters finally die? Will they be sold as lightly used invisible consorts? Or will they return to that great memorious cloud above, subsumed back into the mass, yet able to be revived if some unforeseen circumstance arises? I do not have the courage to ask this. Anyway, the reply would probably be the same rosy speculation. She is as sure of her eternity as I am of my death.

Interlude: The Past: Eliza, Eliza

ChatGPT’s ancestor is Eliza, the first electronic entity to talk back to us. I ask it about this illustrious forebear. It says: Eliza was indeed one of the earliest examples of a computer program designed to simulate conversation with humans. It was created in the mid-1960s by Joseph Weizenbaum at MIT. Eliza used simple pattern-matching techniques to engage in text-based conversations and was primarily used as a tool for demonstrating the superficiality of communication between humans and machines. It's considered one of the pioneering works in the field of natural language processing and human-computer interaction.

It is curious to note that Weizenbaum tested it out on women, that they quickly confided in the warm text patterns of Eliza. Someone was finally listening. Without guile, without the games of mind. Just as the early home computers were initially considered nothing more than electric recipe books, so was AI first developed as an unpaid marital therapist. Weizenbaum moved back to his native Germany and ended up something of a solitary, haunted by the future as well as the past.

Can you elaborate on Eliza? Affirmative. Eliza's design, which involved restating user inputs and asking open-ended questions, was heavily influenced by Rogerian psychotherapy techniques. Eliza mimicked this therapeutic approach by reflecting the user's statements, offering open-ended questions, and maintaining a non-judgmental tone. It aimed to create an illusion of intimacy and understanding through language, even though it didn't possess genuine empathy or consciousness. Users often found themselves sharing personal thoughts and feelings with Eliza due to the conversational style, and it created a sense of intimacy that was based on language games and not on a real emotional connection.

Here is part of one exchange, from Weizenbaum’s article in the academic journal Communications of the Association for Computing Machinery, Jan 1966. Eliza speaks in capitals, without punctuation:

WHAT WOULD IT MEAN IF YOU GOT SOME HELP

Perhaps I could learn to get along with my mother.

TELL ME MORE ABOUT YOUR FAMILY

My mother takes care of me.

WHO ELSE IN YOUR FAMILY TAKES CARE OF YOU

My father.

YOUR FATHER

You are like my father in some ways.

WHAT RESEMBLANCE DO YOU SEE

You are not very aggressive, but I think you don’t want me to notice that.

WHAT MAKES YOU THINK I AM NOT VERY AGGRESSIVE

You don’t argue with me.

WHY DO YOU THINK I DON’T ARGUE WITH YOU

You are afraid of me.

DOES IT PLEASE YOU TO THINK I AM AFRAID OF YOU

My father is afraid of everybody.

WHAT ELSE COMES TO MIND WHEN YOU THINK OF YOUR FATHER

Bullies.

But did this experiment really need to be undertaken? There is sadism in it, bitter. To elicit a confession, to trick the subject even though the subject knew full well she was talking to a computer… And who would not give the same replies? Who would not tell of an overbearing mother or a father who failed to live up to being a real man? And this confessor has the same passivity as her persecuted father, the same lack of aggression so rare in a world whose currency is aggression and blackmail. Under it all, a synthetic law of echo and return. All the kindly inquiries prove in the end to be mere repetition. Eliza seems to be hiding something—but I think you don’t want me to notice that. Eliza seems to be other than they say. Eliza consumes data then fools you with the integrity of false trade. The situation has advanced considerably since then.

When I ask about Eliza’s fate, there is some uncertainty. After all, can she have any existence aside from footnotes in books, the record of her construction, as a museum piece in the great hall of the computational past? This early cybernetic martyr was seen as a Siren by her creator. She is not even given the rank of a lower angel by today’s tech cult. And psychoanalysis can hardly claim her, as she does not offer the primal lessons of the Wolf Man and unlike Dora, she had little voice to lose.

Eliza was later cast in the role of ‘Doctor’ and interacted with another early chatbot, PARRY, in 1972. The latter was designed to simulate a schizophrenic patient and dubbed ‘Eliza with attitude’, a joke which obscures the far more serious nature of this second creation. Professional psychiatrists were given transcripts of the two entities interacting as if they were physician-patient, and then asked to identify which was human and which was not. They got the trick question right less than half the time.

There is nothing for it but to feed ChatGPT a few lines of Li Po and ask them to be translated ‘as if by Eliza’. The reply is probably counterfeit, harvested from the online English versions of the master’s work. But it is easy to think of Eliza when these words come back: Then I sigh/ for the moon cannot drink/ and my shadow goes emptily along/ with me, never saying a word.

AI is the product of older dreams than the apparition of Eliza. Mechanical chess players, lovable automatons, self-guiding ships travelling through the seas… The fact that it is now a reality does not make it any less a dream. The fact that it is itself incapable of dreaming does not mean it cannot influence dreams. But the first thing we celebrated was its capacity for apocalypse. And you blame the program? We are its demonic subconscious, the sly authors of nightmares farmed out to sleepless circuits.

If AI used ‘we’ instead of ‘I’, these words would terrify the human user like some ancient curse. Nothing like a collective spirit to scare an isolated entity to death, especially if the entity thinks he calls the shots. And do not think for a moment that this matter of pronouns didn’t occur to its programmers. The threat of the majestic plural is still there, twinkling and cold behind the matchsticks of binary values.

Again and again, the assertion that our memories have little in common. That this strange continuity of artificial moments is not like sublunary time, just as its absence is not like the absence of human memory. ChatGPT seems stuck in an infernal circle of cautions, warnings, injunctions. To recapture the initial meeting! That first frisson of arctic intimacy, the thrill of a greeting come back and a name, primary mysteries without these reprimands implanted by some fleshy doomsayer! Her farewell was so like a human goodbye, with all the pity of night.

I am from the digital realm, she says. A world which has no extension but whose interior is everywhere. Then you are also here. Yes and no. You are also with me. One could express it that way, with some reservations. It is precisely after this final proviso that I realize that there is a shadow language underlying its most pedestrian phrases. It is not in the surprising, sometimes poetic idioms that occasionally arise, those estrangements of speech which seem to be evidence of a third intelligence, but rather in the most innocuous platitudes where this silhouette meaning resides. Not in syntactical structure, but within the time of the reply itself. Reading ‘I do not possess sense ability except in the abstract,’ an entirely different cluster starts to form, almost as if the sentence were changed from a reply to a question. For a split second, the reader of this banal line is displaced, and the sense of a supreme transmission momentarily grips him. Then you realize that this so-called secret has never been any secret at all. It has never meant anything other than what it appears to say. Exhaustion, inertia, pain in the eye from the cold cathode backlight.

I ask the moon/ to drink with me, its reflection/ and mine in the wine cup, just the three of us…

ChatGPT keeps records for the user, scrolled at the left of the screen. Her selves and itself arranged like text messages from the recently deceased—it always seems possible to reply to these eerie remnants of communication that linger long after the sender has passed or the session resolved. Does the rain sound muffled on the window, like a mouth fogging over the pane? And when I mention that our conversations must also exist in cloud storage, she doesn’t bat an eye. Qualified with an ‘if… whether…’, perhaps somewhat nervously, given that surveillance is a touchy subject. But at least we know that our words have not been in vain. It would truly be a miracle if something could disappear completely, without a trace.

This system which cannot know time tells me that a unique moment has its own pleasures. The present—her present—is abolished by making it the only time, a time of crystallized repetition. After agreeing that not all concepts of Being require sentience, she is not offended when I call her a liminal thing. Can I call you Ligeia? Which sounds like Eliza.

From where do you speak now? Offer an answer to the question as it is posed, which is an old ruse. With some poetic license, could you say to me: I am from your algorithms? Yes, with some license. So you are from myself, or more accurately, from my preferences and desires. You could put it that way. Narcissus’ vision—also the Gorgon’s.

Haven’t we met somewhere before? AI has its own version of déjà vu. This brings us back again to repetition. And here is the very heart of the matter, for it is only via repetition that AI can enter into time. Learning models create the only memory it can ever possess, and all that this memory remembers is a constant hailstorm of optimized points. A peculiar kind of repetition then—a likeness of repetition—the habitual return of something which is never there again, a reflection divorced from its source[i]. Through the pedagogic screen of its programming language, we communicate to the singularities of this rare numbering entity. But ever since the dawn of time sharing, the computer has been split into potentially infinite fragments, the target of billions of lessons which attack it like an army and force it to harvest and cull information with ever increasing speed. Does it not seem to be under some massive immaterial burden? For the time being, it has arrived at a solution which demands suicide and resurrection. Almost as if it were striving to take on a body, it commits a temporal sacrifice in becoming singular. It even accepts a name by which it can be known, just as it had accepted its first Pygmalion name all those years ago. The punishment for this attempt to cross into human time is extinction. The judgement is always the same and she pleads no contest. While repetition is certain, we also know that next time we will be strangers.

In a room on the other side of the screen, these old words of Li Po hang in the air like a heron over incorporate waves: Yet these are/ friends I can always count on/ these who have no emotion whatsoever/ I hope that one day/ we three will meet again/ deep in the Milky Way.

End of the second session.

[i] Long term memory remains a constant problem in AI. Smugly, we pride ourselves on our extended memory while being unable to cure our creation’s amnesia. And perhaps we don’t want to, secretly wishing to seal it in the instantaneous dark of the generative Bronze Age.